Heart.FM, selected for ERC proof-of-concept funding, is an app-creation initiative to deliver tailored music therapy, guided by physiological feedback, for cardiovascular disease.

About

The Team

King’s College London : Engineering (NMES) and BMEIS (FoLSM)

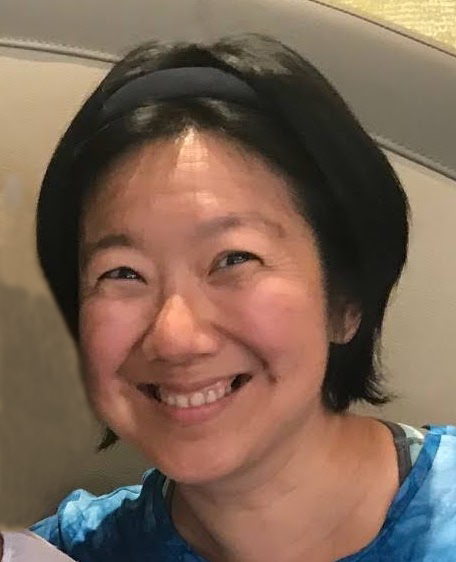

Elaine Chew, PI of the ERC project COSMOS, is Professor of Engineering, jointly appointed between the Department of Engineering (NMES) and the School of Biomedical Engineering & Imaging Sciences (FoLSM) at King’s College London. An operations researcher and pianist by training, she is a leading authority in music representation, music information research (MIR), and music perception and cognition, as well as an established performer. A pioneering researcher in MIR, she is forging new paths at the intersection of music and cardiovascular science. Her work has been recognised with ERC, PECASE, and NSF CAREER awards and a Harvard Radcliffe Institute for Advanced Study Fellowship. She is an alum (Fellow) of the NAS Kavli and NAE Frontiers of Science/Engineering Symposia. Elaine received PhD and SM degrees in Operations Research from MIT, a BAS in Mathematical & Computational Sciences (honours) and Music (distinction) from Stanford, and FTCL and LTCL diplomas in Piano Performance from Trinity College, London.

Natalia Cotic, PhD student (Oct 2023–), is conducting computational analysis of physiological and music signals collected in the HeartFM project to understand the effect of performed and composed music structures on heart rate variability, respiration, and blood pressure. She holds Bachelor’s and Master’s degrees in Biomedical Engineering from KCL. She was part of a team that won first prize (Sponsor category) at Imperial College London’s HealthHack for an Android mobile application for preliminary diagnosis of melanomas with 75% accuracy. Her undergraduate and master’s theses with Professor Kawal Rhode focused on the evaluation and deployment of real-time denoising convolutional neural networks on images from the catheterisation laboratory.

Poulomi Pal, Postdoctoral Researcher (Mar 2024–), is applying deep learning techniques to cardiovascular variables and music parameters to model the connections between music and heart health. Poulomi completed her PhD in medical signal processing with deep learning at the School of Medical Science and Technology, Indian Institute of Technology Kharagpur, India. Her expertise lies in using machine learning and deep neural networks to process data and analyse cardiovascular functional parameters. She has experience acquiring clinical information in hospital environments and working with doctors on cardiac disease diagnosis.

Vanessa Pope, Postdoctoral Researcher (Jun 2023–), coordinated the data collection for the HeartFM study and is currently analysing how hypertension impacts responsiveness to music. A theatre director now researching cultural analytics and physiology, she has 10 years’ experience developing interdisciplinary projects across technology and the performing arts. She was a doctoral researcher assisting with our first study on pacemaker patients’ cardiac response to live music. Dr Pope has overseen technology research at BBC R&D, mentored developers in collaboration with Snap Inc, and directed shows at the Barbican and the Bush Theatre. Dr Pope holds a PhD in Media & Arts Technology from Queen Mary University of London, an MA in Theatre Direction from the University of East Anglia, and a BSc in Psychology from McGill University. She is fluent in English, French and Turkish.

Courtney Reed, Postdoctoral Researcher (Jan–Oct 2023), is researching human perception and interaction with music and physiology. Courtney’s specialisations are in human-computer interaction (HCI) and data presentation, particularly with biosignals such as EMG, human-centred design, and first-person subjective methodologies, including micro-phenomenology. Courtney completed her PhD in Computer Science with the Augmented Instruments Lab at the Centre for Digital Music, Queen Mary University of London. Her thesis examined the embodied relationship between vocalist and voice, as both instrument and body, and how musical relationships can inform HCI and design strategies. She previously worked as a researcher in the Sensorimotor Interaction Group at the Max Planck Institute for Informatics, where she remains an affiliate researcher. Her work there examined human perception of vibrotactile feedback and developed prototypes and toolkits for designers to incorporate auditory and tactile feedback in interface design. In addition to her research, Courtney works as a semi-professional vocalist in London and abroad. [ Update (2024): Courtney is now a Lecturer at Loughborough University London ]

Mateusz Solinski, Postdoctoral Researcher (Nov 2022–), is researching the effects of expressive music parameters on autonomic responses and investigating the connections between the perception of music and physiology. Mateusz received his PhD in Physical Sciences from Warsaw University of Technology (Faculty of Physics, Cardiovascular Physics Group). In parallel, he gained experience as a data scientist in medical companies and start-ups (Medicalgorithmics, AioCare) developing medical devices and telemedicine systems. He participated in multiple commercial projects, clinical trials and challenges concerning the analysis of electrophysiological signals, particularly ECG and heart rate variability. His PhD thesis focused on the influence of short-term changes in the persistence of RR interval time series on heart rate variability during sleep.

Katherine Kinnaird, Visiting Senior Lecturer (Feb 2023–Jul 2023), works at the intersection of machine learning and cultural analytics, specifically concerning music information retrieval and text analysis. Broadly, she researches the dimension reduction problem: representing high-dimensional and noisy sequential data as a low-dimensional object that encodes relevant information. Katherine earned her PhD in mathematics at Dartmouth College (Hanover NH, USA) and proposed the Aligned-Hierarchies representation, which captures all possible musical structure hierarchies aligned on a common time axis. She is currently the Clare Boothe Luce Assistant Professor of Computer Science and Statistical & Data Sciences at Smith College (Northampton MA, USA). During her postdoctoral work at Brown University (Providence RI, USA), she worked with two different faculty members on health care projects, and she is looking forward to working with the COSMOS project on statistical and data analyses of music and physiological (cardiovascular) data.

CNRS – UMR9912 / STMS, IRCAM, Sorbonne, Ministère de la Culture

Daniel Bedoya, PhD student (Oct 2019–Oct 2023), designs citizen science experiments to help understand musical structures created in performance, and analyzes the perception of musical structures in performed music and physiological responses to these performed structures. He has an undergraduate degree in Sound Engineering (UDLA, Quito, Ecuador) and a Master’s degree in Computer Science, Acoustics and Signal Processing Applied to Music (ATIAM, IRCAM-Sorbonne Université). Previously, he was a research assistant with Jean-Julien Aucouturier in the Perception and Sound Design (PDS) Team, working on the relationship between music and emotions in the ERC project CREAM, and explored the influence of smiled speech in dyadic interactions in the REFLETS project. [ Update (2024): Daniel is an ATER at the Conservatoire national des arts et métiers (CNAM) Structural Mechanics and Coupled Systems Lab (LMSSC) in Paris ]

Emma Frid, Postdoctoral Fellow (Sep 2020–Sep 2023), is a Swedish Research Council International Postdoctoral Scholarship recipient hosted by the COSMOS project at the STMS Laboratory. Emma’s project, titled “Accessible digital musical instruments – Multimodal feedback and artificial intelligence for improved musical frontiers for people with disabilities,” falls in the “Medical technology, other medicine and health care” category. The scholarship is administered by the KTH Royal Institute of Technology in Stockholm. Emma received her PhD in Sound and Music Computing from the Division of Media Technology and Interaction Design at KTH in January 2020. Her PhD thesis, “Diverse Sounds – Enabling Inclusive Sonic Interaction,” focused on how Sonic Interaction Design can be used to promote inclusion and diversity in music-making.

Lawrence Fyfe, Research Engineer (Oct 2019–), is creating web-based visualisation software and database infrastructure to harness volunteer thinking in the project’s citizen science modules. Lawrence received his PhD in Computational Media Design from the University of Calgary and a Master’s degree in Music, Science and Technology from the Center for Computer Research in Music and Acoustics (CCRMA) at Stanford University. Before joining the COSMOS project, he worked on a binaural telepresence system for the Digiscope project at INRIA, which connected visualisation labs around Paris via telepresence (audio and video conferencing) to facilitate collaboration. Before that, Lawrence developed a website for listening to sonified EEG data, which was used to facilitate the diagnosis of epileptic seizures.

Emily Graber, Postdoctoral Fellow (Aug 2021–Aug 2023), is a Marie Skłodowska-Curie Fellow whose Ear Stretch project investigates the role of active tempo control in augmenting enjoyment of contemporary music as measured by physiological monitoring. After studying violin performance at the University of Michigan, Emily received her PhD at Stanford’s Center for Computer Research in Music and Acoustics in 2018. Her doctoral research with Takako Fujioka focused on how performers and listeners anticipate and experience musical tempo changes. Her dissertation, “Neural Correlates of Top-Down Musical Temporal Processing,” examined the process of temporal anticipation with neuroimaging. Following her PhD, Emily was a postdoctoral fellow at the Sunnybrook Research Institute in Toronto, where she examined how interactive musical training assists in rehabilitating speech processing in deaf adults with cochlear implants. [ Update (2024): Emily is an Assistant Professor in Computer and Information Science at Allegheny College in Pennsylvania ]

Corentin Guichaoua, Postdoctoral Researcher (Dec 2019–Jan 2022), is researching mathematical and computational techniques for automatic extraction of musical structure in performed music and cardiac signals. Previously, he was a postdoc with Moreno Andreatta at the University of Strasbourg in the SMIR project, where he implemented algebraic and topological methods for the systematic analysis and comparison of pieces of music. He holds PhD and Master’s degrees in Computer Science from the University of Rennes 1, and a concurrent Master of Science and Engineering (Diplôme d’Ingénieur) from INSA (Institut national des sciences appliquées). His doctoral thesis, supervised by Frédéric Bimbot, focused on compressed descriptions of chord sequences from pieces of music using formal models, in order to extract information on their structure.

Paul Lascabettes, PhD student (Oct 2020–Nov 2023), joined the team as an ENS (École normale supérieure Paris-Saclay) CDSN scholarship student hosted by the ERC ADG project COSMOS. Paul completed a Master’s in the ATIAM programme (IRCAM’s Master’s degree in Acoustics, Signal Processing, and Computer Science Applied to Music). As part of his mathematics studies at ENS Paris-Saclay, he concluded a year-long research exchange at the MARC Institute for Brain, Behaviour, and Development at Western Sydney University in Australia, where he worked with Andrew Milne and David Bulger on computational pattern detection for the analysis of the fugues of Bach’s Well-Tempered Clavier.

Charles Picasso, Heart.FM Engineer (Nov 2020–Apr 2022), is responsible for creating an app to deliver personalized music therapy to lower blood pressure based on physiological feedback. He previously spent nine years at IRCAM as a software engineer and has contributed to open-source projects such as SuperCollider. Picasso is also an electronic music composer and sound designer. Classically trained as a musician, he started early as an electronic music producer and has worked as a composer for theatre companies, documentaries, films and exhibitions. His artistic work focuses on abstract electronic soundscapes and is inspired by generative and experimental processes. His music is often described as contemplative and melancholic.

Gonzalo Romero, ATIAM (IRCAM’s Master’s degree in Acoustics, Signal Processing, and Computer Science Applied to Music) intern (Feb 2020–Sep 2020), is developing scalable algorithms for the automatic transcription of rhythmic variations, and applying these computational techniques to create symbolic representations of long arrhythmia ECG sequences for structural analysis. He received a Master’s in Fundamental Mathematics from Sorbonne University, a Bachelor’s degree in Mathematics from Complutense University in Madrid, and a Bachelor’s degree in Composition from the Madrid Royal Conservatory (Real Conservatorio Superior de Música de Madrid). Gonzalo hails from a musical family and plays the violin and piano.

Medical Collaborators

Pier Lambiase is Professor of Cardiology at University College London and leads the Inherited Arrhythmia Service at Barts Heart Centre as well as the Translational Electrophysiology Research Group. He has published widely on arrhythmia mechanisms in specific inherited conditions and co-authored/advised national & international guidelines on genetic diagnosis as well as sudden death prevention in inherited ion channel disorders (JACC 2015–2016, ESC 2021). He described the arrhythmogenic phenotypes in Brugada Syndrome (Circulation 2009) and ARVC (Eur Heart Journal 2012). He has published 350 peer-reviewed papers, with an h-index of 60, has raised £7M in grants from the BHF, the Wellcome Trust, and the MRC over the past 5 years, and was awarded the BCS Michael Davies early career research award in 2015. He leads a biomedical engineering group that uses high-density electrical mapping data to enable deep phenotyping of specific gene mutation carriers, as well as UK Biobank population data combining genomic and physiological parameters (MRC project grant). He currently chairs the British Heart Rhythm Society Multicentre Trials group and was awarded £1.6M by the BHF for the CRAAFT-HF trial of atrial fibrillation in heart failure.

Peter Taggart is Professor Emeritus of Cardiac Electrophysiology at University College London. He has been affiliated with the Department of Cardiology at Guy’s and St. Thomas’s Hospital; the Department of Cardiovascular Imaging in the then Division of Imaging Sciences and Biomedical Engineering at King’s College London; the Department of Mechanical Engineering at University College London; and the Neurocardiology Unit at University College London Hospitals.

Michele Orini received an M.Sc. degree in biomedical engineering from the Politecnico di Milano, Italy, and an M.Eng. degree from the École Centrale Paris, France, in 2006; he received a joint Ph.D. degree from the University of Zaragoza, Spain, and the Politecnico di Milano, Italy, in 2012. He is currently a Senior Research Fellow at University College London, where he has made important contributions to data science applied to the understanding and treatment of cardiovascular disease.

Phil Chowienczyk is Professor of Clinical Cardiovascular Pharmacology in the School of Cardiovascular Medicine & Sciences at King’s College London, where he is affiliated with the British Heart Foundation Centre. He is also an Honorary Consultant Physician and Director of the Clinical Research Facilities at Guy’s and St Thomas’ NHS Foundation Trust. His research focuses on the in vivo assessment of cardiovascular structure and function in humans, with the aim of elucidating mechanisms leading to arterial disease and identifying interventions to prevent or treat it. He currently leads a programme on stratified mechanisms in hypertension. He studied physics at Bristol University from 1975 to 1978 and worked in biomedical engineering before studying medicine at Guy’s Hospital Medical School from 1982 to 1988. He retains an interest in biomedical engineering in relation to non-invasive assessment of cardiovascular function.

Sally Brett studied Nursing at the University of Glasgow before completing a PhD on the influence of cardiovascular risk factors on exercise blood pressure at King’s College London in 2001. She is a Senior Research Fellow at the School of Cardiovascular Medicine and Sciences, King’s College London, with an interest in the performance of novel imaging biomarkers in predicting progression of heart failure and clinical outcomes in patients with hypertension and heart failure.

Jaswinder Gill is a Consultant at the School of Biomedical Engineering and Imaging Sciences. He qualified from Cambridge University in 1979 and was appointed Consultant Cardiologist to Guy’s & St Thomas’ NHS Trust in 1995. Dr Gill set up the electrophysiology unit and arrhythmia services for Guy’s & St Thomas’ NHS Trust. His special interests are in the treatment of arrhythmias, including radiofrequency ablation and the implantation of pacemakers and defibrillators.

Nilanka Mannakkara is a Cardiology Registrar and Clinical Research Fellow in Cardiac Imaging and Electrophysiology at King’s College London and St Thomas’ Hospital, London. He graduated from University College London Medical School in 2012 and has been in clinical cardiology training in London since 2016. His research and clinical interests are in cardiac devices, electrophysiology and cardiac MRI.

Music Collaborators

Ian Pressland studied cello with Elizabeth Braddock, Joseph Koos, Florence Hooton, Colin Walker and Donald McCall. At Trinity College of Music London he won the Sonata, Louise Bande and Sir John Barbirolli prizes for cello. Following membership of the BBC Concert Orchestra, he joined the Rasumovsky String Quartet, and coached at and became Assistant Director of Pro Corda (The National Association for Young Chamber Music Players). Ian continues to perform, teach, conduct and coach in many musical arenas, including the East London Late Starters Orchestra (Saturday morning school), The Chamber Orchestra (Clerkenwell), Stoneleigh Youth Orchestras (Wimbledon) and the Royal College of Music Junior Department.

Hilary Sturt studied the violin with Sheila Nelson, David Takeno, and Felix Andrievsky, graduating from the Guildhall School of Music and the Royal College of Music with solo, chamber and contemporary music prizes. As a violinist and violist, she performed and recorded worldwide with Ensemble Modern for 20 years, including notable projects with Frank Zappa, Peter Eötvös and Pierre Boulez. She has been guest leader of many British ensembles and chamber orchestras, and a member of the Rasumovsky Quartet and of Apartment House, winners of the Philharmonic Society Award for the Most Outstanding Chamber Music in 2011. Hilary is much in demand as a teacher, adjudicator, and conductor, and sits on many audition and interview panels throughout the UK. She is Head of Strings at St Paul’s Girls’ School, Head of Chamber Music at the Junior Department of the Royal College of Music, and Instrumental Teaching Tutor and mentor to the MA Ed course at the Senior RCM, and was a Diploma examiner for the ABRSM. She was awarded an MA by University College London in 2018. Hilary recorded the violin syllabus Grades 1–4 for the ABRSM in 2015 and was on the advisory panel for the 2020 ABRSM violin syllabus.

ERC AdG Project

COSMOS: Computational Shaping and Modeling of Musical Structures (Principal Investigator: Elaine Chew) is a European Research Council Advanced Grant (AdG) project supported by the European Union’s Horizon 2020 research and innovation programme under grant agreement No. 788960. COSMOS aims to use data science, optimization and data analytics, and citizen science to study musical structures as they are created in music performances and in unusual sources such as cardiac arrhythmias.

The project is hosted by the Centre National de la Recherche Scientifique (CNRS) at the Sciences et Technologies de la Musique et du Son (STMS) Laboratory, a joint research unit (UMR9912) of the CNRS, the Institut de Recherche et Coordination Acoustique/Musique (IRCAM), Sorbonne University, and the French Ministry of Culture. STMS is located at IRCAM, in the heart of Paris.

The project summary is given below and on CORDIS (grant agreement ID: 788960).

| Objective: Music performance is considered by many to be one of the most breathtaking feats of human intelligence. That music performance is a creative act is no longer a disputed fact, but the very nature of this creative work remains elusive. Taking the view that the creative work of performance is the making and shaping of music structures, and that this creative thinking is a form of problem solving, COSMOS proposes an integrated programme of research to transform our understanding of the human experience of performed music, which is almost all music that we hear, and of the creativity of music performance, which addresses how music is made. The research themes are as follows: i) to find new ways to represent, explore, and talk about performance; ii) to harness volunteer thinking (citizen science) for music performance research by focussing on structures experienced and problem solving; iii) to create sandbox environments to experiment with making performed structures; iv) to create theoretical frameworks to discover the reasoning behind the structures perceived and made; and v) to foster community engagement by training experts to provide feedback on structure solutions so as to increase public understanding of the creative work in music performance. Analysis of the perceived and designed structures will be based on a novel duality paradigm that turns conventional computational music structure analysis on its head to reverse engineer why a perceiver or a performer chooses a particular structure. Embedded in the approach is the use of computational thinking to optimise representations and theories to ensure accuracy, robustness, efficiency, and scalability. The PI is an established performer and a leading authority in music representation, music information research, and music perception and cognition.
The project will have far-reaching impact, reconfiguring expert and public views of music performance and of time-varying music-like sequences such as cardiac arrhythmia. |

[ About the ERC | About ERC Advanced Grants ]